The latest Azure certification exams are demanding and call for a lot of demos and hands-on practice. While I was preparing and experimenting, an e-commerce Azure SaaS platform took shape unintentionally.

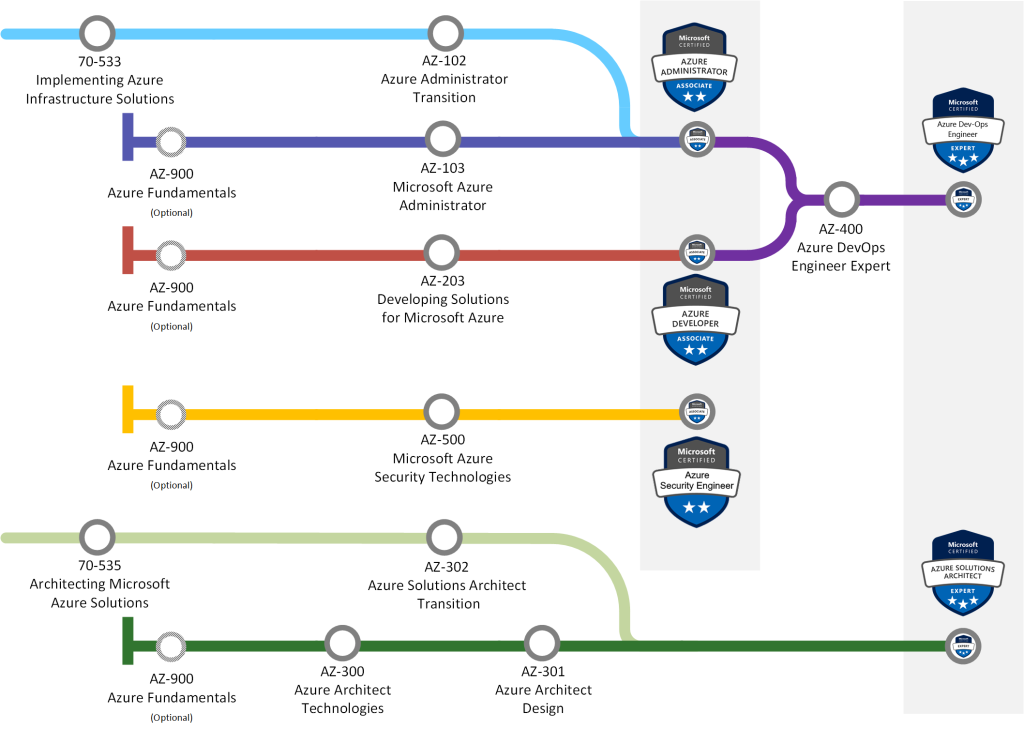

Taking Microsoft certification exams has been a part of my professional career since 2007. Certification not only verifies my current level of knowledge but also pushes me to study newly available technology. I’m currently on the DevOps journey with the following exams:

- AZ-900 Azure Fundamentals (Done)

- AZ-103 Azure Administrator Associate (Done)

- AZ-203 Azure Developer Associate (Done)

- AZ-400 Azure DevOps Engineer Expert (Studying at the moment)

Experiments turned into a SaaS platform

There are many guides and blog posts on how to study for exams and what materials to read. I find it best to read the Microsoft documentation, build demos, and run experiments. During the study period, my demos and architectural decisions became a fully functioning Azure SaaS platform. I’m still on the study path, so there is room for upgrades and changes to the architectural decisions.

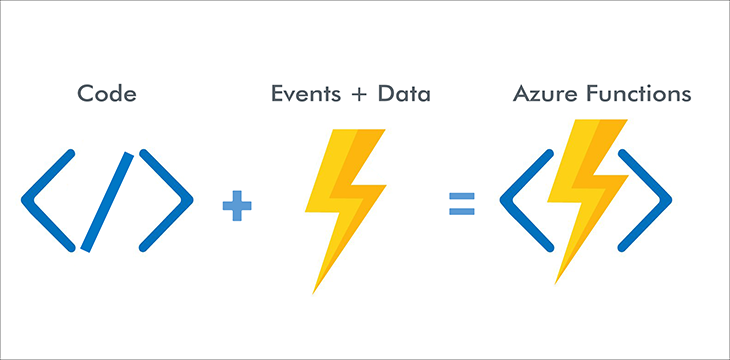

The demo I have created is an e-commerce solution built on Azure products and services that are part of the skills measured in the exams. To improve processes and add a layer of intelligence to the application, I plan to include AI tools from Microsoft Cognitive Services in the platform. Trying out these AI services will also show how mature they are and how ready they are for enterprise solutions.

I have kept a start-up mentality in mind by keeping the cost of Azure services as low as possible. I also plan to include cost calculations for the services used in my upcoming posts. DevOps methodologies and tools will be an active part of the process as well. DevOps helps to keep everything as simple as possible and to automate as many processes as possible.

The SaaS platform is currently running at https://obrame.azurewebsites.net. “Obra” means “work” in Spanish, which is the current language of the service.

Upcoming blog posts will explain the processes of the SaaS solution. The posts will also go through important Azure services and their role in the technical implementation. The next blog post will explore the high-level architecture and initial services used to run the application in the Microsoft cloud.